The limiting factor is that the size of the lookup table (LUT) tends to increase dramatically.

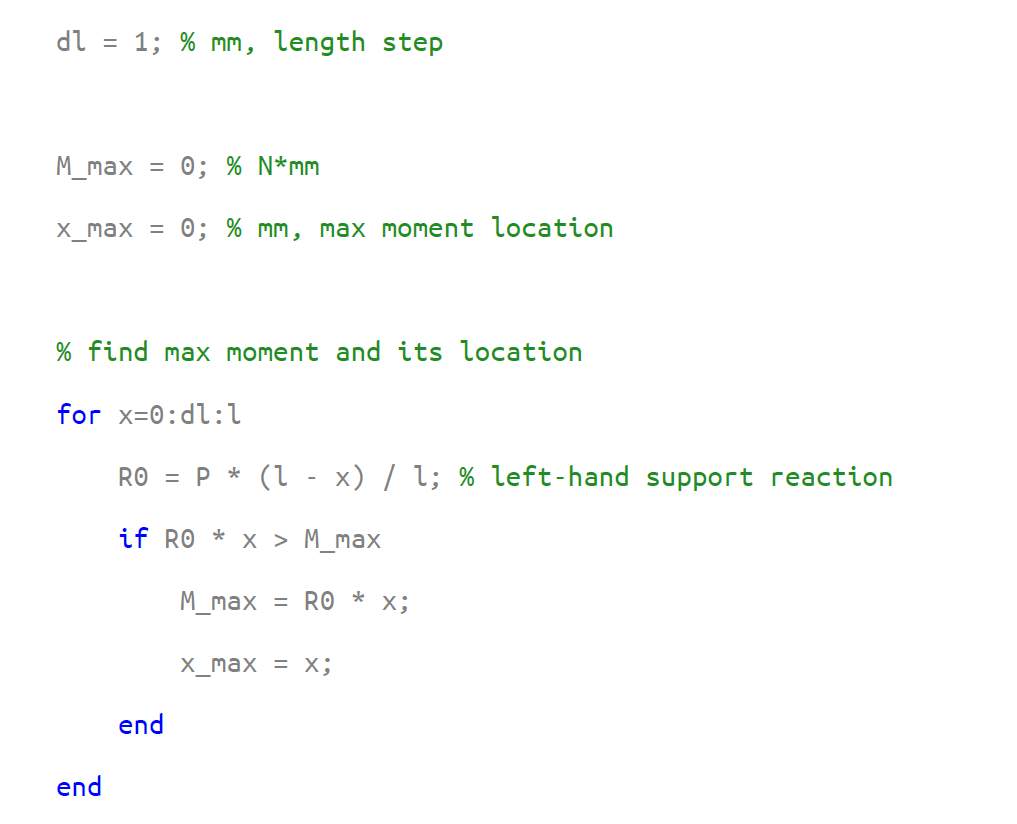

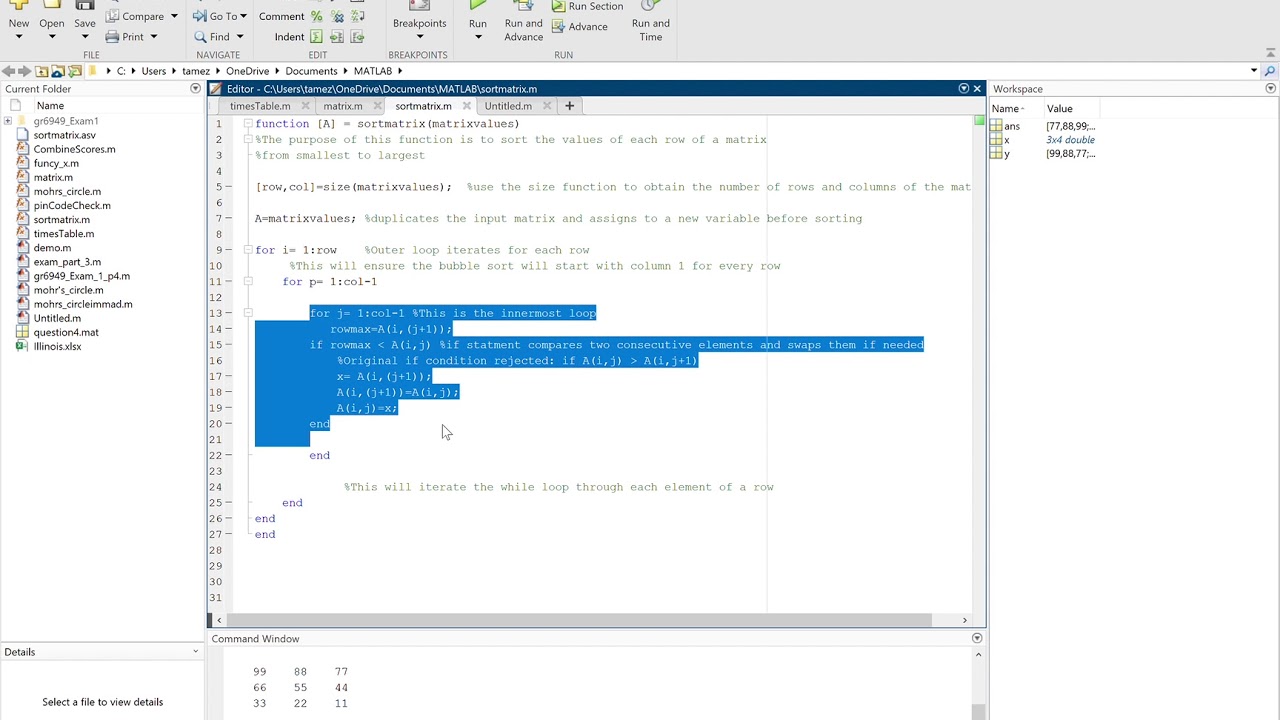

This approach should be considered when the combinational logic is not amenable to pipelining, for example because it is implemented as table lookup in a ROM. In other terms, the recursive computation is carried out at a lower pace but on wider data words. The idea is to process two or more samples in each recursive step so that an integer multiple of the sampling interval becomes available for carrying out the necessary computations. One technique, referred to as expansion or look-ahead, is closely related to aggregate computation. More methods for speeding up general time-variant first-order feedback loops are examined in. (3.72) t l p f ″ = max ( t l p f 1 ″, t l p f 2 ″ ) < t l p f In summary, architecture, performance, and cost figures resemble those found for linear computations. Even more speedup can be obtained from higher unfolding degrees, the price to pay is multiplied circuit size and extra latency, though. The computation so becomes amenable to pipelining and retiming, see fig.3.39f, which cuts the longest path in half when compared to the original architecture of fig.3.39b. Following an associativity transform the DDG is redrawn as shown in fig.3.39e. Assume function f is known to be associative. Yet, the unfolded recursion can serve as a starting point for more useful reorganizations. DDG reorganized for an associative function f (e), pertaining architecture after pipelining and retiming (f), DDG with the two functional blocks for f combined into f ' (g), pertaining architecture after pipelining and retiming (h). Original DDG (a) and isomorphic architecture (b), DDG after unfolding by a factor of p = 2 (c), same DDG with retiming added on top (d). Architectural alternatives for nonlinear time-variant first-order feedback loops. Performance and cost analysisįigure 3.39. While performance and power data are obsolete today, loop unfolding allows to push out the need for fast but costly fabrication technologies such as GaAs, then and now. 
At full speed the chip dissipates 2.2 W from a 5 V supply. 56 The authors write that one to two extra data bits had to be added in the unfolded datapath to maintain similar roundoff and quantization characteristics as in the initial configuration.

Overall computation rate roughly is 1.5 Gop/s. The circuit, fabricated in a 0.9 μm CMOS technology, has been measured to run at a clock frequency of 85 MHz and spits out one sample per clock cycle, so Γ = 1. Pipelined multiply-add units have been designed as combinations of consecutive carry-save and carry-ripple adders. DDG after unfolding by a factor of p = 4 (a) and high-performance architecture with pipelining and retiming on top (b).Ī high-speed fourth-order ARMA 55 filter chip that includes two sections similar to fig.3.37b has been reported back in 1992. Linear time-invariant second-order feedback loop. The idea of loop unfolding demonstrated on a linear time-invariant first-order recursion can be extended into various directions, and this is the subject of the forthcoming three subsections. More on the positive side, the shortened longest path may bring many recursive computations into the reach of a relatively slow but power-efficient technology or may allow for a lower supply voltage. Loop unfolding greatly inflates the amount of energy dissipated in the processing of one data item because of the extra feedforward computations and the many latency registers added to the unfolded circuitry. Now, the larger number of roundoff operations that participate in the unfolded loop with respect to the initial configuration leads to more quantization errors, a handicap which must be offset by using somewhat wider data words. In the above example, for instance, addition would typically handle only part of the bits that emanate from multiplication. For the sake of economy, datapaths are designed to do with minimum acceptable word widths, which implies that output and intermediate results get rounded or truncated somewhere in the process. 
In return for an almost fourfold throughput, this is finally not too bad.Īnalogously to what was found for pipelining in section 3.4.3, the speedup of loop unfolding tends to diminish while the difficulties of balancing delays within the combinational logic tend to grow when unfolding is pushed too far, p » 1.Ī hidden cost factor associated with loop unfolding is due to finite precision arithmetics. In the above example, we count three times the original arithmetic logic plus 14 extra (nonfunctional) registers.
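The unfolding ideas discussed in this section can be illustrated with a minimal Python sketch. It is a behavioral model only, not a description of any of the architectures in the figures. The function names `recursion`, `unfolded_linear`, and `unfolded_associative` are hypothetical, and the linear recursion is assumed to have the form y(k) = a·y(k−1) + x(k), which the text does not spell out. Both unfolded versions work with p = 2 and assume an even number of input samples.

```python
def recursion(f, xs, y0):
    """Reference first-order recursion y(k) = f(y(k-1), x(k))."""
    ys, y = [], y0
    for x in xs:
        y = f(y, x)
        ys.append(y)
    return ys


def unfolded_linear(a, xs, y0):
    """Unfolding by p = 2 of the assumed form y(k) = a*y(k-1) + x(k).

    The state advances two samples per step:
        y(k+1) = a^2 * y(k-1) + a*x(k) + x(k+1)
    Only the multiply-add with a^2 sits inside the feedback loop.
    """
    a2 = a * a                          # precomputed loop coefficient
    ys, y = [], y0
    for k in range(0, len(xs), 2):
        ff = a * xs[k] + xs[k + 1]      # feedforward part, could be pipelined
        y_mid = a * y + xs[k]           # intermediate output, not fed back
        y = a2 * y + ff                 # the only operation in the loop
        ys.extend([y_mid, y])
    return ys


def unfolded_associative(f, xs, y0):
    """Unfolding by p = 2 for an associative f (assumption: f is associative).

    Associativity gives f(f(y, x1), x2) == f(y, f(x1, x2)), so the inner
    f(x1, x2) moves out of the feedback loop.
    """
    ys, y = [], y0
    for k in range(0, len(xs), 2):
        pair = f(xs[k], xs[k + 1])      # feedforward, independent of y
        y_mid = f(y, xs[k])             # intermediate output, not fed back
        y = f(y, pair)                  # one f per two samples in the loop
        ys.extend([y_mid, y])
    return ys


if __name__ == "__main__":
    samples = [3, 1, 4, 1, 5, 9, 2, 6]
    print(unfolded_associative(max, samples, 0) == recursion(max, samples, 0))
    print(unfolded_linear(3, samples, 1)
          == recursion(lambda y, x: 3 * y + x, samples, 1))
```

Integer arithmetic is used so that both variants match the reference exactly. With rounded fixed-point words the unfolded versions would generally differ slightly from the reference, which is the finite-precision effect the text describes.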
